
Your Generative Engine Optimisation (GEO) strategy probably treats all AI platforms the same. Most organisations do. They optimise once, deploy everywhere, and assume that what works on ChatGPT works on Perplexity, Google AI Overviews, Claude, Gemini, Grok, and Google AI Mode alike.

It doesn't. And that assumption is costing you visibility on most of them.

Google AI Overviews now appear on roughly 48% of all tracked queries globally, rising to 82% in B2B technology searches. Brands cited inside AI summaries earn 35% more organic clicks and 91% more paid clicks than brands left out. Yet a uniform approach to GEO typically achieves meaningful visibility on one or two platforms whilst leaving you invisible on the rest.

For Swiss organisations, this isn't theoretical. Google AI Overviews and AI Mode are both live in Switzerland across German, French, Italian, and English. Your customers are already receiving AI-generated answers. The question is whether your brand appears in them.

Why AI platforms aren't interchangeable

Traditional search engines share broadly similar architectures. Domain authority, backlinks, and content relevance matter on Google, Bing, and DuckDuckGo alike, so optimising for one largely optimises for all. AI platforms diverge from one another far more, and in ways that matter.

The differences run deep. ChatGPT draws primarily from training data and favours thorough, authoritative content. Perplexity operates as a real-time search engine that weights community validation and recency. Google AI Overviews maintain deep integration with traditional E-E-A-T signals. Claude prioritises reasoning depth and original insights. Gemini leans on structured data and multimodal content. LinkedIn has emerged as a major citation source through verified professional identity. Grok checks X (formerly Twitter) in real time before crawling the web. And Google AI Mode, a dedicated conversational search tab distinct from AI Overviews, breaks complex queries into parallel sub-searches using its Query Fan-out architecture.

What does this mean in practice? Content that earns a prominent citation on ChatGPT may be entirely invisible on Perplexity, and vice versa. Take brand mention rates: studies put ChatGPT's between 74% and 99% of responses, depending on methodology, whereas Google AI Overviews sit at around 6%. That gap alone tells you why a uniform strategy fails.
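Expressed as a ratio, and taking the approximate 6% figure for AI Overviews at face value, the gap looks like this:

```latex
\frac{74\%}{6\%} \approx 12.3
\qquad \text{to} \qquad
\frac{99\%}{6\%} = 16.5
```

Whichever ChatGPT estimate you use, its brand mention rate is roughly 12 to 16.5 times that of AI Overviews, which is about an order of magnitude.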

Eight platforms, eight different logics

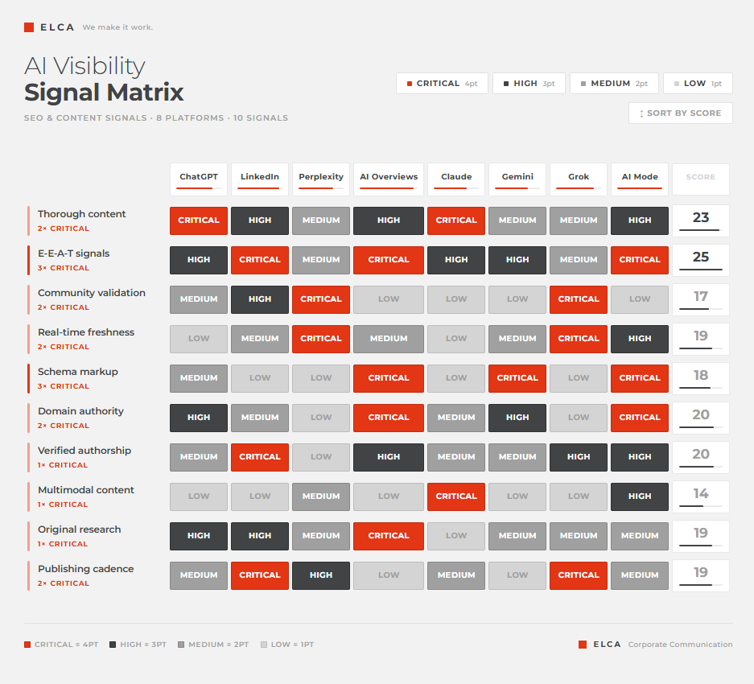

The platform signal heatmap

We've synthesised this analysis into a signal heatmap showing how much weight ten key optimisation factors carry on each of the eight platforms. Use it to decide where to invest your GEO resources.

No single signal is "Critical" across all eight platforms. Community validation is decisive for Perplexity and Grok but irrelevant for Claude and AI Overviews. Schema markup is critical for AI Overviews, Gemini, and AI Mode but carries minimal weight elsewhere. Original research is the strongest signal for Claude but only a medium factor on most other platforms. Real-time freshness is decisive for Perplexity and Grok but negligible for ChatGPT and Claude. A uniform strategy inevitably over-invests in some areas whilst neglecting others entirely.
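To use the heatmap for planning, you can treat it as a lookup table that maps each signal to a weight per platform. The sketch below is our own illustration and does not reproduce the whitepaper's data. It encodes only the cells stated in the paragraph above and collapses the ratings onto a hypothetical three-level scale. The helper names (`critical_signals`, `signals_to_prioritise`, `critical_everywhere`) are also hypothetical.

```python
from __future__ import annotations

from enum import IntEnum


class Weight(IntEnum):
    # Hypothetical three-level scale; the full heatmap may use different levels.
    MINIMAL = 0   # covers "minimal", "negligible" and "irrelevant" in the text
    MEDIUM = 1
    CRITICAL = 2  # covers "critical", "decisive" and "strongest"


PLATFORMS = [
    "ChatGPT", "Perplexity", "AI Overviews", "Claude",
    "Gemini", "LinkedIn", "Grok", "AI Mode",
]

# Only the cells stated in the article; all other cells are unknown and omitted.
HEATMAP: dict[str, dict[str, Weight]] = {
    "community validation": {
        "Perplexity": Weight.CRITICAL, "Grok": Weight.CRITICAL,
        "Claude": Weight.MINIMAL, "AI Overviews": Weight.MINIMAL,
    },
    "schema markup": {
        **{p: Weight.MINIMAL for p in PLATFORMS},  # "minimal weight elsewhere"
        "AI Overviews": Weight.CRITICAL,
        "Gemini": Weight.CRITICAL,
        "AI Mode": Weight.CRITICAL,
    },
    "original research": {
        # Described as the strongest signal for Claude; treated as CRITICAL (assumption).
        "Claude": Weight.CRITICAL,
    },
    "real-time freshness": {
        "Perplexity": Weight.CRITICAL, "Grok": Weight.CRITICAL,
        "ChatGPT": Weight.MINIMAL, "Claude": Weight.MINIMAL,
    },
}


def critical_signals(platform: str) -> list[str]:
    """Signals rated CRITICAL for one platform, among the known cells."""
    return sorted(s for s, row in HEATMAP.items() if row.get(platform) == Weight.CRITICAL)


def signals_to_prioritise(priority_platforms: list[str]) -> dict[str, list[str]]:
    """Map each critical signal to the priority platforms that depend on it."""
    plan: dict[str, list[str]] = {}
    for platform in priority_platforms:
        for signal in critical_signals(platform):
            plan.setdefault(signal, []).append(platform)
    return plan


def critical_everywhere() -> list[str]:
    """Signals rated CRITICAL on all eight platforms (none, per the article)."""
    return [
        s for s, row in HEATMAP.items()
        if all(row.get(p) == Weight.CRITICAL for p in PLATFORMS)
    ]


if __name__ == "__main__":
    print(signals_to_prioritise(["Perplexity", "AI Overviews", "Claude"]))
    print(critical_everywhere())
```

Suppose a team prioritises Perplexity, AI Overviews and Claude. The lookup returns community validation and real-time freshness for Perplexity, schema markup for AI Overviews, and original research for Claude. That is four distinct investments for three platforms, which is the point the heatmap makes.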

What this means for your strategy

Effective GEO in 2026 requires three things. First, visibility auditing: you need to know where your brand currently appears and where it doesn't across all eight platforms. Second, platform prioritisation: not all platforms carry equal business value for your organisation, and resource allocation should reflect where your audience actually interacts with AI. Third, platform-aligned content: the same insight may need to be packaged as a thorough guide for ChatGPT, a LinkedIn Article for professional visibility, a community contribution for Perplexity, a structured data asset for AI Overviews, and an X thread for Grok.
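The first two steps can be combined into a simple gap score. This heuristic is our own and is not taken from the whitepaper. It assumes that your visibility audit has already measured, for each platform, the share of sampled prompts in which your brand appears. It also assumes that you have given each platform a business-value weight reflecting where your audience uses AI. Platforms that combine high business value with low current visibility move to the top of the list. The class and function names, and all the numbers in the example, are invented placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PlatformAudit:
    platform: str
    visibility: float      # share of sampled prompts mentioning the brand, 0..1
    business_value: float  # hypothetical weight for how much this platform matters, 0..1


def gap_score(audit: PlatformAudit) -> float:
    """High business value combined with low current visibility gives a high score."""
    if not (0.0 <= audit.visibility <= 1.0 and 0.0 <= audit.business_value <= 1.0):
        raise ValueError("visibility and business_value must lie in [0, 1]")
    return audit.business_value * (1.0 - audit.visibility)


def rank_platforms(audits: list[PlatformAudit]) -> list[tuple[str, float]]:
    """Order platforms by descending gap score, i.e. where to invest first."""
    scored = [(a.platform, round(gap_score(a), 3)) for a in audits]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Placeholder figures for illustration only, not measured data.
    audits = [
        PlatformAudit("ChatGPT", visibility=0.60, business_value=0.9),
        PlatformAudit("AI Overviews", visibility=0.10, business_value=1.0),
        PlatformAudit("Perplexity", visibility=0.05, business_value=0.3),
    ]
    for platform, score in rank_platforms(audits):
        print(f"{platform}: {score}")
```

With these placeholders, AI Overviews ranks first (0.9), ahead of ChatGPT (0.36) and Perplexity (0.285). Multiplying the two factors is a deliberate choice: a platform your audience doesn't use scores near zero however invisible you are there.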

And this isn't a one-time exercise. AI platform citation behaviours are volatile. Changes of 4 to 5x in platform priorities have happened within single quarters. LinkedIn's rise as a citation source was barely visible twelve months ago. Grok and Google AI Mode didn't feature in any GEO framework a year ago. Quarterly monitoring and recalibration aren't optional.
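Quarterly recalibration can start with a simple comparison of each platform's measured mention or citation rate across two quarters. The sketch below is a hypothetical illustration. It flags any platform whose rate has moved by a factor of four or more in either direction, echoing the 4 to 5x swings described above. The threshold, the function name and the sample figures are our own assumptions.

```python
from __future__ import annotations


def flag_shifts(
    previous: dict[str, float],
    current: dict[str, float],
    threshold: float = 4.0,
) -> dict[str, float]:
    """Return platforms whose rate changed by at least `threshold`x, with the fold change.

    A fold change above 1 means growth; below 1 means decline (0.25 is a 4x drop).
    Platforms missing from either quarter, or with a zero rate, are skipped
    because the ratio is undefined for them.
    """
    flagged: dict[str, float] = {}
    for platform, before in previous.items():
        after = current.get(platform)
        if after is None or before <= 0 or after <= 0:
            continue
        fold = after / before
        if fold >= threshold or fold <= 1 / threshold:
            flagged[platform] = round(fold, 2)
    return flagged


if __name__ == "__main__":
    # Invented example rates (share of sampled responses citing the brand).
    q1 = {"ChatGPT": 0.40, "LinkedIn": 0.02, "Perplexity": 0.20}
    q2 = {"ChatGPT": 0.38, "LinkedIn": 0.09, "Perplexity": 0.04}
    print(flag_shifts(q1, q2))
```

With these invented figures, the small dip on ChatGPT passes unflagged. LinkedIn's 4.5x rise and Perplexity's 5x fall are both flagged for review.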

Frequently asked questions

- What is platform-specific GEO?

Platform-specific GEO treats each AI platform as a distinct channel rather than a single thing to be optimised in bulk. The eight platforms that matter today (ChatGPT, Perplexity, Google AI Overviews, Claude, Gemini, LinkedIn, Grok, and Google AI Mode) evaluate and cite content using different signals. Content that earns prominent placement on one can be invisible on another. The work is closer to running parallel content strategies than running one strategy that scales.

- Why doesn't optimising for ChatGPT cover all AI search?

Because brand mention rates differ by an order of magnitude across platforms. ChatGPT mentions brands in between 74% and 99% of responses, depending on methodology, whereas Google AI Overviews sit at around 6%. The inputs driving those mentions differ just as much: ChatGPT favours authoritative depth, Perplexity weights community validation and recency, AI Overviews lean on E-E-A-T and schema markup, and Grok pulls primarily from real-time X activity. A ChatGPT-first strategy gets you visibility on ChatGPT. It doesn't get you visibility anywhere else.

- Which AI platforms should Swiss organisations prioritise?

Priority depends on where your audience actually engages with AI, but for most Swiss B2B organisations the core set is consistent. Google AI Overviews and AI Mode are live in Switzerland in German, French, Italian, and English, and they reach the broadest audience. ChatGPT still holds roughly 60% of the AI search market. Gemini has scaled to 750M+ monthly active users and is embedded across Google Workspace. LinkedIn has become the second most-cited source for B2B topics, which surprised most of us in the GEO space. Perplexity and Claude matter for narrower professional and research audiences. Where to start is a different question, and the answer depends on a current visibility audit.

- How often should we re-audit our GEO performance across platforms?

Quarterly at minimum. Citation behaviours have shifted by 4 to 5x within a single quarter. LinkedIn's rise as a major citation source, Grok's appearance in GEO frameworks, and the arrival of Google AI Mode each reshaped GEO priorities within twelve months. Annual audits leave you months behind. Where AI-driven discovery accounts for a meaningful share of the funnel, continuous monitoring beats fixed-cadence reviews.

- How is platform-specific GEO different from traditional multilingual SEO?

Multilingual SEO adapts content for different languages on search engines that work broadly the same way. Platform-specific GEO adapts content for AI systems that work very differently from each other. Training data, weighting of community versus authority signals, treatment of structured data, recency requirements: all of these shift between platforms. The work is closer to omnichannel content strategy than to translation.

- How do we know whether our platform-specific GEO is working?

Traditional SEO metrics like rankings, organic traffic, and backlinks were built for a search world AI is rapidly replacing. The metric that matters now is Share of Model: how often your brand surfaces inside AI-generated responses across the platforms your audience uses. Brand mentions correlate 0.664 with AI visibility, roughly three times more strongly than backlinks at 0.218. We dig into this properly in the next article.

The bigger question

Understanding platform differences is essential context. But it raises a more pointed question: if each AI engine behaves differently, and citation behaviours shift quarterly, how do you systematically measure your brand's presence across all of them?

Traditional SEO metrics (rankings, organic traffic, backlinks) were designed for a search world that's being rapidly replaced. The metric that matters now is how often your brand gets surfaced inside AI systems: your Share of Model. Brand mentions correlate 0.664 with AI visibility, three times more strongly than backlinks at 0.218. We'll explore this concept, and what it means for measurement and strategy, in our next article.
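In its simplest form, you can estimate Share of Model in three steps. Sample the prompts your audience is likely to ask, collect each platform's responses, and count the share that mention your brand. The sketch below assumes that setup. The case-insensitive whole-word match, the optional audience weights and the function names are our own simplifications, not a standard definition. A production measurement would also have to handle brand-name variants, sampling variance and repeated runs.

```python
from __future__ import annotations

import re
from typing import Optional


def mentions_brand(response: str, brand: str) -> bool:
    """Naive detector: case-insensitive whole-word match on the brand name."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def share_of_model(
    responses: dict[str, list[str]],
    brand: str,
    weights: Optional[dict[str, float]] = None,
) -> tuple[dict[str, float], float]:
    """Estimate Share of Model from sampled AI responses.

    per_platform[p] = responses on p mentioning the brand / responses sampled on p
    overall = sum(w_p * per_platform[p]) / sum(w_p)
    Weights default to equal; platforms missing from `weights` get weight 0.
    """
    per_platform = {
        platform: sum(mentions_brand(text, brand) for text in texts) / len(texts)
        for platform, texts in responses.items()
        if texts  # skip platforms with no sampled responses
    }
    if not per_platform:
        raise ValueError("no sampled responses to score")
    weights = weights or {platform: 1.0 for platform in per_platform}
    total = sum(weights.get(p, 0.0) for p in per_platform)
    if total <= 0:
        raise ValueError("at least one scored platform needs a positive weight")
    overall = sum(weights.get(p, 0.0) * share for p, share in per_platform.items()) / total
    return per_platform, overall


if __name__ == "__main__":
    # Invented responses for a hypothetical brand "Acme"; not real platform output.
    sample = {
        "ChatGPT": ["Acme and Globex both offer this.", "Try Globex.",
                    "acme is a good fit.", "No clear leader."],
        "AI Overviews": ["Globex leads here.", "Several vendors exist."],
    }
    per_platform, overall = share_of_model(sample, "Acme")
    print(per_platform, overall)
```

In this invented sample, "Acme" appears in 2 of 4 ChatGPT responses (0.5) and in none of the AI Overviews responses (0.0), for an equally weighted Share of Model of 0.25. Weighting AI Overviews three times as heavily, because that is where your audience is, lowers the figure to 0.125.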

Platform specificity in 2026 | ELCA Digital Agency


Go deeper: download the full whitepaper.

Platform specificity in 2026

Why your one-size-fits-all GEO strategy is failing

The article above covers the key findings. The full whitepaper includes detailed analysis of each platform's citation behaviour, the complete signal heatmap with strategic implications, platform user data and growth trajectories, and a four-step framework for platform-specific GEO.

Want to understand where your brand stands across AI platforms?

Contact ELCA's GEO experts for a platform visibility assessment.

Roger Zimmermann

Expert in Digital Marketing

Meet Roger Zimmermann, our expert specialising in search engine optimisation and digital marketing.

Sources

- AI citation analysis across 230,000+ queries (Semrush, 2025–2026).

- LinkedIn citation growth data (Semrush platform analysis, mid-2025 to late 2025).

- AI Overview global penetration: approximately 48% of all tracked queries, 82% in B2B tech (BrightEdge, February 2026).

- AI Overview monthly users: over two billion (Google, 2026).

- CTR impact of AI summaries: brands cited earn 35% more organic clicks, 91% more paid clicks (Semrush, 2025).

- ChatGPT usage: 900M weekly active users, 50M+ paying subscribers (OpenAI / TechCrunch, February 2026).

- ChatGPT market share: 60.2% (First Page Sage, April 2026).

- Gemini monthly active users: 750M+ as of Q4 2025 (Alphabet Q4 2025 earnings, February 2026).

- Brand mention correlation with AI visibility: 0.664 (Ahrefs / Profound, 2025).

- Google AI Mode: launched in Switzerland in October 2025; 75M+ daily active users, 1B+ queries/month (Google, 2025–2026).

- Brand mention frequency: ChatGPT 74–99% depending on methodology (Columbia Journalism Review, Profound, 2025–2026); AI Overviews approximately 6% (Profound / industry analysis, 2026).